Here’s something they don’t tell you when you’re buying that $1,200 camera or downloading the latest photo editing app:

The problem isn’t your skin. The problem is the camera was never built with you in mind.

For decades—decades—the entire imaging industry spent its energy making white skin look flawless on film. Not “skin” in general. Not “people” in general. White skin. Light skin. Pale skin. That specific complexion became the literal standard against which every camera, every film stock, every digital sensor, every algorithm was calibrated.

And if your skin didn’t match that standard? Well, that was your problem to solve.

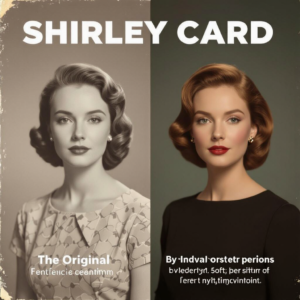

Meet Shirley: The Woman Who Defined “Normal”

In the 1950s, Kodak introduced something called Shirley cards—reference photos used to calibrate color in photo labs. These cards showed a white woman. Always a white woman. With light skin, brown hair, and what the industry decided was the “normal” range of skin tones worth capturing accurately.

The first model was a Kodak employee named Shirley Page, and her name stuck to every card that followed. For decades, Shirley was the benchmark.

Photographers and lab technicians would use these Shirley cards to adjust color balance, contrast, and exposure. If Shirley’s skin looked good—peachy, luminous, properly exposed—then the film stock was doing its job. If the prints showed her with accurate skin tones and good detail in the highlights and shadows, the color processing was correct.

But here’s the thing: Shirley wasn’t just a reference. She became the reference. The only reference.

So what happened when darker skin showed up on that same film stock? It appeared underexposed. Flat. Muddy. Lost in shadow. Details disappeared. Richness vanished. Because the system wasn’t designed to see it, capture it, or render it beautifully.

It wasn’t a bug. It was the design.

The industry optimized everything—chemical formulas, exposure latitude, color science—around making light skin look good. Darker skin tones? Completely ignored. Not factored into the equation. Not part of the “normal” range worth accommodating.

The Digital Age Didn’t Fix Anything—It Made It Worse

You’d think when cameras went digital, this problem would disappear. New technology, new possibilities, fresh start, right?

Wrong.

Digital cameras carried that same legacy forward like inherited trauma. The engineers building autoexposure algorithms and white balance systems trained them on datasets—and those datasets were dominated by lighter skin tones. Same bias, new format.

When your phone’s camera tries to figure out the “correct” exposure, it’s looking at the scene and making calculations based on what it was taught is “normal.” And what it was taught to treat as normal is, you guessed it, light skin.

So when you take a selfie and your face comes out looking two shades darker than it actually is, or your features blend into shadow, or the camera brightens the background but leaves you underexposed, that’s not accidental. That’s the algorithm doing exactly what it was designed to do: optimize for light skin and treat everything else as an edge case.
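To see how a metering choice alone can bury a face in shadow, here’s a deliberately simplified sketch. It is not how any real phone’s pipeline works: the 0.18 middle-gray target, the made-up scene values, and the function names are all assumptions for illustration. It compares metering the whole frame against metering only the face.

```python
import math

# Assumed metering target on a linear 0..1 luminance scale (illustrative only).
MID_GRAY = 0.18


def gain_for_target(luminances, target=MID_GRAY):
    """Exposure gain that brings the mean of the metered pixels to the target."""
    mean = sum(luminances) / len(luminances)
    return target / mean


def stops(gain):
    """Express a gain as photographic stops (EV)."""
    return math.log2(gain)


# Hypothetical scene: a bright window behind a face with a deep skin tone.
background = [0.60] * 80  # 80% of the frame
face = [0.06] * 20        # 20% of the frame

frame_gain = gain_for_target(background + face)  # meter the whole frame
face_gain = gain_for_target(face)                # meter only the face

for label, gain in (("whole-frame", frame_gain), ("face-only", face_gain)):
    rendered = face[0] * gain
    print(f"{label} metering: {stops(gain):+.2f} EV, face renders at {rendered:.3f}")
```

In this toy scene the whole-frame meter pulls exposure down to tame the bright background, so the face lands far below the target. Metering on the face raises it instead. Real cameras use tuned targets and far more sophisticated metering, so treat the numbers as illustrative only.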

Early photo filters and beauty apps made it even more obvious. “Beautify” meant lighten. “Smooth skin” meant erase texture and reduce melanin. “Enhance” meant make you look closer to the Shirley card standard—lighter, brighter, whiter.

Even when these apps claimed to be “beauty tools,” the underlying message was clear: Your natural skin tone isn’t beautiful enough. Let us fix it by making you look less like yourself.

Facial Recognition: When Beauty Bias Becomes Dangerous

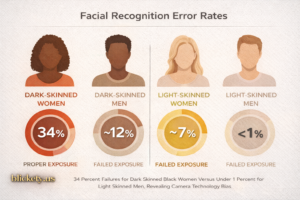

This isn’t just about bad selfies or frustrating photo shoots. This bias has real consequences.

Facial recognition systems (the ones used by law enforcement, airport security, and surveillance networks) carry the same legacy. Multiple studies have found that facial recognition algorithms have significantly higher error rates for darker-skinned people, especially Black women.

A landmark 2018 study led by MIT researcher Joy Buolamwini, known as Gender Shades, found that some commercial facial analysis systems misclassified the gender of dark-skinned women at error rates as high as 34.7%, compared to less than 1% for light-skinned men.

Nearly thirty-five percent.

Think about what that means. These systems are being used to identify suspects, grant access, verify identity—and they literally cannot see Black faces accurately because the training data focused overwhelmingly on white faces.
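A gap like that only shows up when someone bothers to break the error rate down by group. Here’s a minimal sketch of that kind of audit. The records are made-up toy values, not data from any study, and the group labels and function name are hypothetical.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Return {group: fraction of wrong predictions}.

    Each record is a (group, predicted, actual) tuple.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Made-up toy records for illustration, NOT results from any real system.
records = (
    [("group_a", "match", "match")] * 99
    + [("group_a", "no_match", "match")] * 1
    + [("group_b", "match", "match")] * 7
    + [("group_b", "no_match", "match")] * 3
)

overall = sum(pred != actual for _, pred, actual in records) / len(records)
by_group = error_rates_by_group(records)

print(f"overall error rate: {overall:.1%}")
for group, rate in sorted(by_group.items()):
    print(f"{group} error rate: {rate:.1%}")
```

The single overall number looks respectable because the larger group dominates it. The per-group breakdown is what exposes the failure, which is why a system tested mostly on one kind of face can ship with a flattering headline accuracy.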

When the beauty industry’s bias becomes law enforcement’s tool, people’s lives are at stake. Wrongful arrests. Denied access. Invasive surveillance that doesn’t even work correctly but still gets deployed in Black neighborhoods.

All because the people building these systems started with the same assumption that Kodak made in the 1950s: white skin is the default, and everything else is optional.

The Chocolate vs. Furniture Problem

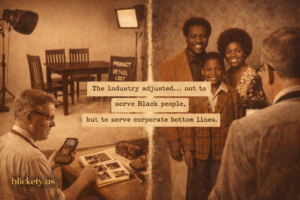

In the 1970s, there was finally some pushback—but not because of civil rights. Not because of justice. Not because someone suddenly cared about how Black people looked in photographs.

Because furniture companies and chocolate manufacturers complained.

See, the same film stocks that couldn’t properly capture darker skin tones also struggled with dark wood furniture and brown chocolate products. And when you’re trying to sell expensive mahogany dining sets or advertise chocolate bars, and your product photography makes everything look muddy and indistinct, that’s a problem.

So the industry adjusted. They created new film stocks with better sensitivity to darker tones—not to serve Black people, but to serve corporate bottom lines.

It worked for furniture and chocolate. But for human skin? The old biases persisted. Because there wasn’t enough economic pressure to change, and the people making decisions about what “normal” looked like weren’t the ones being erased from photographs.

Why Your Ring Light Isn’t Enough

Walk into any beauty influencer’s setup and you’ll see ring lights, softboxes, diffusers, and a whole ecosystem of lighting equipment designed to make people look good on camera.

But here’s what they don’t tell you: Most of that equipment is also designed with light skin in mind.

The “perfect lighting” tutorials you see online? They’re optimized for skin that reflects light a certain way. The three-point lighting setups that are supposed to be “universal”? They work great if your skin tone matches the industry standard. If it doesn’t, you’re often fighting against the equipment.

Darker skin requires different lighting strategies—more light overall, careful attention to fill light to bring out detail, thoughtful use of reflectors. Not because darker skin is “harder to photograph.” Because the entire system was built assuming it wouldn’t be in the frame.

Professional photographers who specialize in photographing Black skin know this. They’ve developed techniques, invested in specific equipment, learned to work around the industry’s limitations. But the average person with a smartphone shouldn’t have to become a lighting expert just to take a decent photo.

What’s Finally Starting to Change

After decades of this nonsense, people are finally trying to fix it. And by “people,” I mean mostly the folks who’ve been dealing with this problem their whole lives and got tired of waiting for the industry to care.

Google’s Real Tone is one of the most visible efforts. It’s an ongoing project to tune Pixel cameras and editing tools so they represent a much wider range of skin tones more accurately—not just the lighter ones.

Google says it retuned the camera’s face detection, auto-exposure, and auto-white-balance, trained its models on more diverse datasets, worked with photographers who specialize in darker skin tones, and actually tested the results on real people with real melanin.

The results? Photos where darker skin actually looks like skin—rich, detailed, properly exposed. Where the camera doesn’t automatically brighten and flatten. Where beauty filters don’t default to “make you lighter.”

Other companies are slowly following. Apple’s newer iPhones have improved computational photography for diverse skin tones. Some camera manufacturers are finally including darker-skinned models in their test images and calibration processes.

But let’s be clear: This is happening in 2025. We’re talking about basic competence in capturing human skin tones in 2025. The fact that this is considered innovation—that companies are getting praised for finally doing what should have been standard from day one—tells you everything about how deep this bias runs.

The Beauty Industry’s Role in This Mess

While camera companies were optimizing for Shirley, the beauty industry was selling skin lightening creams, “brightening” serums, and foundation shades that stopped at “medium tan.”

The message was consistent across both industries: Lighter is better. Lighter is more beautiful. Lighter is what the technology serves, what the products enhance, what the culture values.

Photography became another tool in that arsenal. Bad photos of darker skin weren’t seen as a technology failure—they were seen as proof that darker skin was less photogenic, less beautiful, less worthy of being captured well.

And when people internalized that message, they bought the lightening creams. They used the filters that erased melanin. They stayed out of photos or spent hours trying to “fix” images that were broken by design, not by their appearance.

The camera’s bias became beauty culture’s weapon.

What This Means for You Right Now

If you’ve ever looked at a photo of yourself and thought “I don’t look like that,” you’re not wrong. If you’ve noticed that your skin looks amazing in the mirror but flat on camera, you’re not imagining it. If you’ve felt like you have to work twice as hard to get a decent photo compared to your lighter-skinned friends, that’s because you do.

None of that is your fault.

Your skin isn’t “hard to photograph.” Your features aren’t “difficult to capture.” You don’t need a filter to make you beautiful.

What you need is technology that was designed with you in mind from the beginning. And we’re just now—in 2025—starting to see that happen.

How to Get Better Photos Right Now

While we wait for the entire industry to catch up, here’s what you can do:

Lighting is everything. More light is usually better for darker skin. Natural light near a window works great. If you’re using artificial light, make sure it’s bright enough and diffused (not harsh and direct).

Avoid auto settings when possible. Your phone’s camera is making assumptions based on biased training data. Manual controls let you override that. On most phones you can tap your face so the camera meters on it, then drag the exposure slider up. Adjust shadows. Take control.

Edit with intention. Don’t just hit “auto enhance” and call it done. Learn which adjustments actually serve your skin tone. Lift shadows. Preserve highlights. Adjust white balance. Make the technology work for you instead of against you.

Use tools built for you. Google Real Tone, newer iPhone portrait modes, apps specifically designed for diverse skin tones—seek out technology that actually sees you.

Demand better. When a camera or app fails you, say something. Leave reviews. Contact companies. Make noise. The only reason things are changing now is because people refused to accept “that’s just how cameras work” as an answer.
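If you’re curious what “lift shadows, preserve highlights” means under the hood, here’s a rough sketch. It is one simple tone curve chosen for illustration, not the curve any particular editing app uses, and the function names and the `amount` parameter are assumptions. It contrasts a shadow lift with a blunt global brightness boost.

```python
def lift_shadows(value, amount=0.5):
    """Brighten dark tones more than bright ones.

    `value` is a luminance in 0..1 and `amount` is a strength in 0..1.
    The boost is scaled by (1 - value) ** 2, so it fades out toward the
    highlights and pure white stays pure white.
    """
    if not 0.0 <= value <= 1.0:
        raise ValueError("value must be in 0..1")
    if not 0.0 <= amount <= 1.0:
        raise ValueError("amount must be in 0..1")
    weight = (1.0 - value) ** 2
    return value + amount * weight * value


def brighten_globally(value, gain=1.4):
    """Multiply every tone by the same gain, clipping anything past white."""
    return min(1.0, value * gain)


for tone in (0.05, 0.10, 0.30, 0.60, 0.90):
    lifted = lift_shadows(tone)
    boosted = brighten_globally(tone)
    print(f"{tone:.2f} -> shadow lift {lifted:.3f}, global boost {boosted:.3f}")
```

The shadow lift raises the darker tones noticeably while barely touching the bright ones. The global boost pushes the brightest tone all the way to pure white, which is how highlight detail gets blown out when you simply crank brightness.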

The Bigger Picture

This isn’t just about photography. This is about who gets to be seen, who gets to be beautiful, who gets to be the standard against which everything else is measured.

For decades, the imaging industry made a choice: white skin matters most. Everything else is secondary. Optional. An afterthought.

That choice shaped beauty standards, influenced how we see ourselves, and affected everything from casual snapshots to law enforcement surveillance.

And it was never about aesthetics or technical limitations. It was always about whose image was considered worth capturing accurately. Whose beauty was considered worth preserving. Whose face was considered “normal.”

The camera doesn’t lie—but it was built to tell a very specific truth. And that truth was: you’re only beautiful if you look like Shirley.

We’re finally starting to dismantle that lie. To build imaging technology that sees the full range of human beauty. To create tools that serve everyone, not just the people who’ve always been served.

But we’ve got a long way to go. And every photo that comes out underexposed, every facial recognition error, every beauty filter that defaults to “lighten” is a reminder that the legacy of Shirley cards and biased algorithms is still shaping our world.

The Future We’re Building

Imagine cameras that capture rich, deep skin tones with the same care they give pale complexions. Imagine beauty apps where “enhance” doesn’t mean “erase melanin.” Imagine facial recognition systems that actually see Black faces as clearly as white ones.

That’s the future we should have had from the beginning. That’s the future we’re fighting for now.

Not because we need the validation of being photographed well—we know we’re beautiful. But because technology shapes culture, and culture shapes how we see ourselves and each other. When the cameras can’t see us, it’s easier for people to dismiss us. When the algorithms fail us, it’s easier to deny us access, rights, safety.

Getting imaging technology right isn’t just about beauty. It’s about visibility. Recognition. Humanity.

And we’re done waiting for the industry to figure that out on its own.

The next time you take a photo and it doesn’t capture your beauty accurately, remember: The problem isn’t you. It never was.

The problem is a system built on a foundation of Shirley cards and white skin as default. A system that’s finally starting to change—not because the industry suddenly grew a conscience, but because we demanded better and refused to accept being erased.

Your skin is beautiful. The camera just needs to catch up.